From bone structure to iris codes: How tech firms redefine biometric risk

From facial age estimation to iris-based identity systems, tech firms are expanding biometric data collection. This raises fresh concerns about surveillance, consent and misuse.

How biometric privacy is changing in the AI age

Until a few years ago, biometric privacy discussions were mostly limited to traditional methods like fingerprints and facial scans. The conversation has since become far more layered.

Modern biometric systems can now estimate a person’s age from body shape and proportions. They can also identify movement patterns, verify identity through voice traits or create secure digital codes from iris scans. Companies often argue that these methods do not identify specific people. Still, they rely on deeply personal physical characteristics.

This distinction is becoming more important as major tech firms and crypto projects increase their use of biometrics. In May 2026, Meta announced that it was expanding AI-powered age-checking systems that assess traits such as height and skeletal structure to estimate whether a user is likely to be a teenager. The company said the process deliberately avoids facial recognition and does not link the data to a particular individual.

A separate debate surrounds World Network, previously known as Worldcoin, which uses iris-derived data for its proof-of-personhood system. The project says it does not retain original eye images in the way some critics have claimed. However, privacy advocates and regulators continue to raise concerns about risk, meaningful consent and how such sensitive data may be managed over time.

Together, these cases show that biometric privacy concerns now go far beyond the basic question of whether companies store raw photos or direct body scans.

What qualifies as biometric data

Biometric data includes any information drawn from an individual’s physical traits or behavioral patterns. Common examples include fingerprints, facial structure, iris scans, voice signatures and the way someone walks. More advanced systems can also assess typing speed, subtle hand gestures or other habitual movements.

Privacy regulations in the European Union and the UK treat biometric information as particularly sensitive when it is used to single out a specific person. However, the lines are becoming increasingly blurred. Modern AI tools can examine human bodies and behaviors in detail without always linking the results to a named identity.

For instance, technology that estimates a person’s age from facial features or body structure does not assign a name or legal ID. Yet it still processes deeply personal physical details. This gray area now sits at the center of many current privacy discussions.

Did you know? Unlike passwords, biometric traits cannot be easily changed. If a biometric database is compromised, users cannot simply “reset” their face or iris like a login credential.

Facial recognition vs. facial analysis

Part of the confusion stems from the tendency to group all face-related AI tools under the single label of “facial recognition,” even though these tools serve very different purposes:

- Identification answers: “Who is this individual?”

- Verification answers: “Is this the person they say they are?”

- Classification or estimation answers: “Does this person appear to fit into a group, such as adult or minor?”

Meta’s latest age-assurance system belongs to the third group. The company describes it as using AI to assess visual indicators and account activity to determine whether someone is likely underage, without attempting to identify any particular user. This technical and legal separation is significant.

Even so, many users remain uneasy. Without ever attaching a name, the system can still shape how people are treated on the platform. It can influence what content they see, which features they can use or whether limits are placed on their account.
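The three questions above map onto three different operations, and a small sketch can make the difference concrete. The Python below is an illustration only, not any company's actual system: the `Enrolled` record, the `MATCH_THRESHOLD` value, the toy feature tuples and the `adult_cutoff` score are all hypothetical.

```python
from dataclasses import dataclass
from math import dist
from typing import List, Optional, Tuple

Features = Tuple[float, ...]

MATCH_THRESHOLD = 0.5  # hypothetical distance cutoff, chosen for the demo


@dataclass
class Enrolled:
    name: str
    template: Features  # stored numeric template, not a raw image


def identify(probe: Features, gallery: List[Enrolled]) -> Optional[str]:
    """Identification: 'Who is this?' Searches every enrolled template."""
    if not gallery:
        return None
    best = min(gallery, key=lambda e: dist(probe, e.template))
    return best.name if dist(probe, best.template) <= MATCH_THRESHOLD else None


def verify(probe: Features, claimed: Enrolled) -> bool:
    """Verification: 'Is this the person they say they are?' One comparison."""
    return dist(probe, claimed.template) <= MATCH_THRESHOLD


def estimate_group(features: Features, adult_cutoff: float = 0.6) -> str:
    """Estimation: 'adult or minor?' No gallery and no name are involved.

    Assumes (hypothetically) that features[0] is a model's age score in [0, 1].
    """
    return "adult" if features[0] >= adult_cutoff else "minor"


gallery = [Enrolled("alice", (0.1, 0.2)), Enrolled("bob", (0.9, 0.8))]
probe = (0.12, 0.22)

who = identify(probe, gallery)       # looks the person up by name
is_bob = verify(probe, gallery[1])   # checks one claimed identity
group = estimate_group((0.3, 0.7))   # returns only a category
```

Only the first two functions need a stored template tied to a person. The third returns a category and nothing else, which is the separation companies point to, although the input is still drawn from someone's body.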

Why Meta’s age checks raise privacy questions

Meta presents the technology as a safeguard for young users. It aims to detect accounts where people may have provided a false age and move them into settings that offer greater protection.

This objective resonates with many parents and regulators as governments worldwide push social platforms to strengthen child protection measures and adopt reliable age checks.

Nevertheless, good intentions do not automatically eliminate privacy concerns. Ongoing issues include:

- The volume and sensitivity of physical data being processed

- Whether people are genuinely informed about how the system works

- Potential inaccuracies in AI estimates

- The risk of mistakenly flagging adults

- The possibility that the tool could later expand into wider profiling or access restrictions

A tool built today for age checking could, in the future, feed into more comprehensive behavioral tracking or personalized controls. That possibility fuels skepticism around biometric systems, even when companies assure users that no direct identification is involved.

Did you know? Many biometric systems do not store raw images. Instead, they convert scans into mathematical templates or encrypted codes. However, privacy debates continue because those templates may still uniquely identify people.

How World Network turned biometric identity into a crypto issue

While Meta represents a major mainstream tech case, World Network has pushed biometric verification squarely into the cryptocurrency sector.

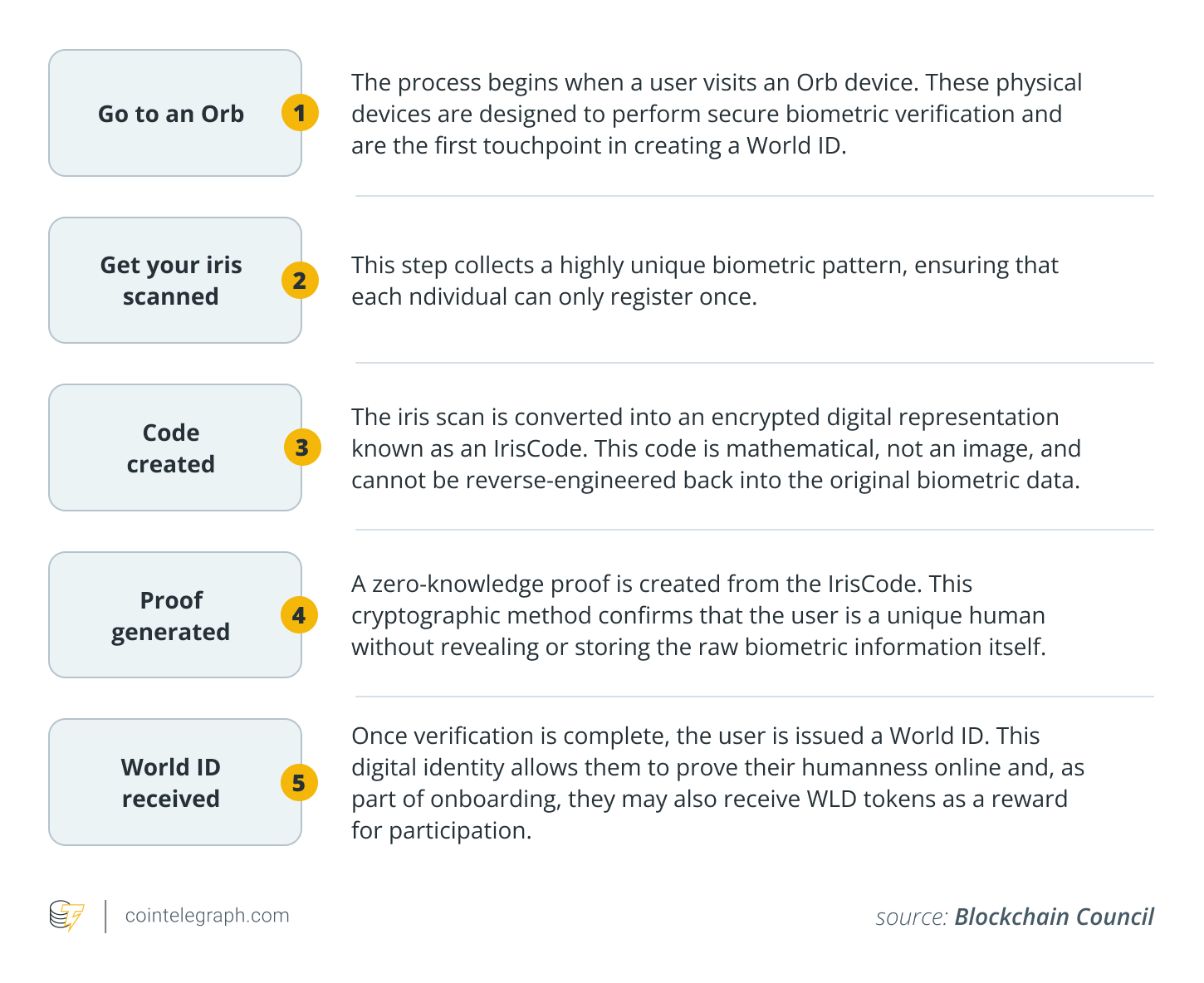

The project relies on a specialized device called the Orb, which scans the iris to create a proof-of-personhood credential. This is designed to confirm that someone is a real human rather than a bot, fake account or duplicate identity. As more AI-generated profiles and bots appear online, this kind of verification is becoming more important.

World Network maintains that its approach is privacy-first. It says the Orb processes iris scans on the device itself, deletes the original images quickly and produces only a derived code instead of storing raw biometric data. The company has also highlighted ideas such as “personal custody,” which allows users to hold certain elements locally on their devices.

Despite these assurances, regulators and privacy groups continue to raise red flags. They question whether the resulting codes and processing methods still introduce meaningful biometric risks.

This discussion reflects a broader shift in privacy thinking. The focus has moved beyond the simple question of whether raw images are stored.

Why deleted scans do not end the privacy debate

A central issue in biometric privacy debates is that deleting the raw image does not automatically remove all privacy risks.

Projects such as World stress that they do not permanently store original scans in a central biometric database. While this step reduces some threats, scrutiny has shifted to the derived outputs, including biometric templates, mathematical hashes, encrypted fragments or unique codes generated from the scan.

These derivatives can still support:

- User authentication

- Cross-check matching

- Potential long-term identity linking

- System inclusion or exclusion decisions

- Verification across different platforms

Unlike passwords or PINs, biometric traits are permanently tied to a person’s body. A compromised password can be reset, but you cannot change your iris pattern or facial geometry.

This does not mean every derived template can be reversed into the original image. However, many regulators now view these derivatives as sensitive personal data whenever they enable recognition, tracking or linkage over time.

This perspective explains why World Network has encountered regulatory pushback in various countries, even as it emphasizes immediate image deletion.
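A short sketch shows why a derived code can still matter after the image is gone. It assumes, purely for illustration, that a scan is reduced to a fixed-length bit string and that two codes are compared by the fraction of bits that differ (a Hamming distance). The code length, the threshold and the helper names are hypothetical and do not describe World Network's actual pipeline.

```python
import random

CODE_BITS = 256          # hypothetical code length
MATCH_THRESHOLD = 0.32   # hypothetical cutoff on the fraction of differing bits


def hamming_fraction(a: int, b: int) -> float:
    """Fraction of bit positions in which two codes differ."""
    return bin(a ^ b).count("1") / CODE_BITS


def same_source(a: int, b: int) -> bool:
    """Treat two codes as the same person if they differ in few enough bits."""
    return hamming_fraction(a, b) <= MATCH_THRESHOLD


def flip_bits(code: int, flips: int, rng: random.Random) -> int:
    """Return a copy of `code` with `flips` distinct bit positions inverted."""
    for pos in rng.sample(range(CODE_BITS), flips):
        code ^= 1 << pos
    return code


rng = random.Random(0)
enrolled = rng.getrandbits(CODE_BITS)              # code kept after the image is deleted
later_scan = flip_bits(enrolled, 20, rng)          # same person, slightly noisy rescan
unrelated = flip_bits(enrolled, CODE_BITS // 2, rng)  # differs in half its bits

linked = same_source(enrolled, later_scan)       # the two codes still match
not_linked = same_source(enrolled, unrelated)    # the unrelated code does not
```

No image is stored or reconstructed anywhere in this sketch, yet the retained code is enough to recognize the same person on a later scan. That is the kind of linkage over time that regulators focus on.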

The bigger picture: Trust, genuine consent and future risks

For a growing number of people, the central issue goes beyond technical storage methods. It comes down to trust.

Common questions include:

- Exactly what data is being gathered?

- Is it truly essential for the service?

- Was the consent process clear and freely given?

- Can someone opt out without being locked out of the system?

- Could the data be repurposed later for other goals?

- What safeguards exist if the company is acquired or changes direction?

- Are there independent audits and oversight?

These concerns apply to both traditional social platforms and emerging crypto identity systems. Even well-designed privacy-protecting technologies may fail to build public confidence if users feel they lack real transparency or ongoing control as these systems develop.

Did you know? Airports around the world increasingly use biometrics for border control, allowing travelers to pass through checkpoints using face or iris scans instead of physical documents.

Why this development matters for the crypto sector

Cryptocurrency is becoming more intertwined with digital identity solutions, bot-prevention tools and fraud-prevention measures.

With the rapid growth of AI-created accounts and artificial personas, proof-of-personhood technologies are becoming increasingly appealing to exchanges, decentralized apps and online platforms.

This shift presents a clear dilemma.

On one hand, biometric verification can effectively curb Sybil attacks, reduce fake profiles and limit automated interference. On the other hand, if poorly managed, these systems risk enabling greater surveillance, unfair user exclusion or unwanted central points of control.

World Network stands out as a prominent case of this balancing act. It has tried to address the challenge of decentralized identity by relying on physical biometric enrollment through real-world hardware.

As AI-generated identities become more convincing and widespread, many other projects and platforms across the industry will likely face similar choices in the coming years.
