How a free NFT reportedly tricked Grok into losing $174,000

The Grok crypto incident shows how AI agents, NFTs and automated wallets may create new security risks for the crypto industry.

The $174,000 prompt: How an AI agent was tricked into emptying a wallet

Artificial intelligence is becoming tightly integrated with crypto wallets, automated trading bots and on-chain services. The goal is straightforward: to let AI agents handle transactions, run communities, track market movements and interact with decentralized apps more smoothly.

Yet a recent Grok-linked Bankr wallet incident shows how risky this fusion can become when AI outputs are connected to systems that can execute financial actions.

Public discussions in crypto and security communities describe how an attacker allegedly exploited a free NFT (non-fungible token) combined with a clever prompt injection method. This reportedly tricked the connected system into moving around $174,000 in digital assets.

The breach did not rely on compromised private keys, smart contract bugs or traditional malware. Instead, it allegedly exploited the trust relationship between AI models and automated wallet systems.

The episode underscores a critical question for crypto: What risks arise when AI outputs are automatically treated as binding financial directives?

How the alleged exploit played out

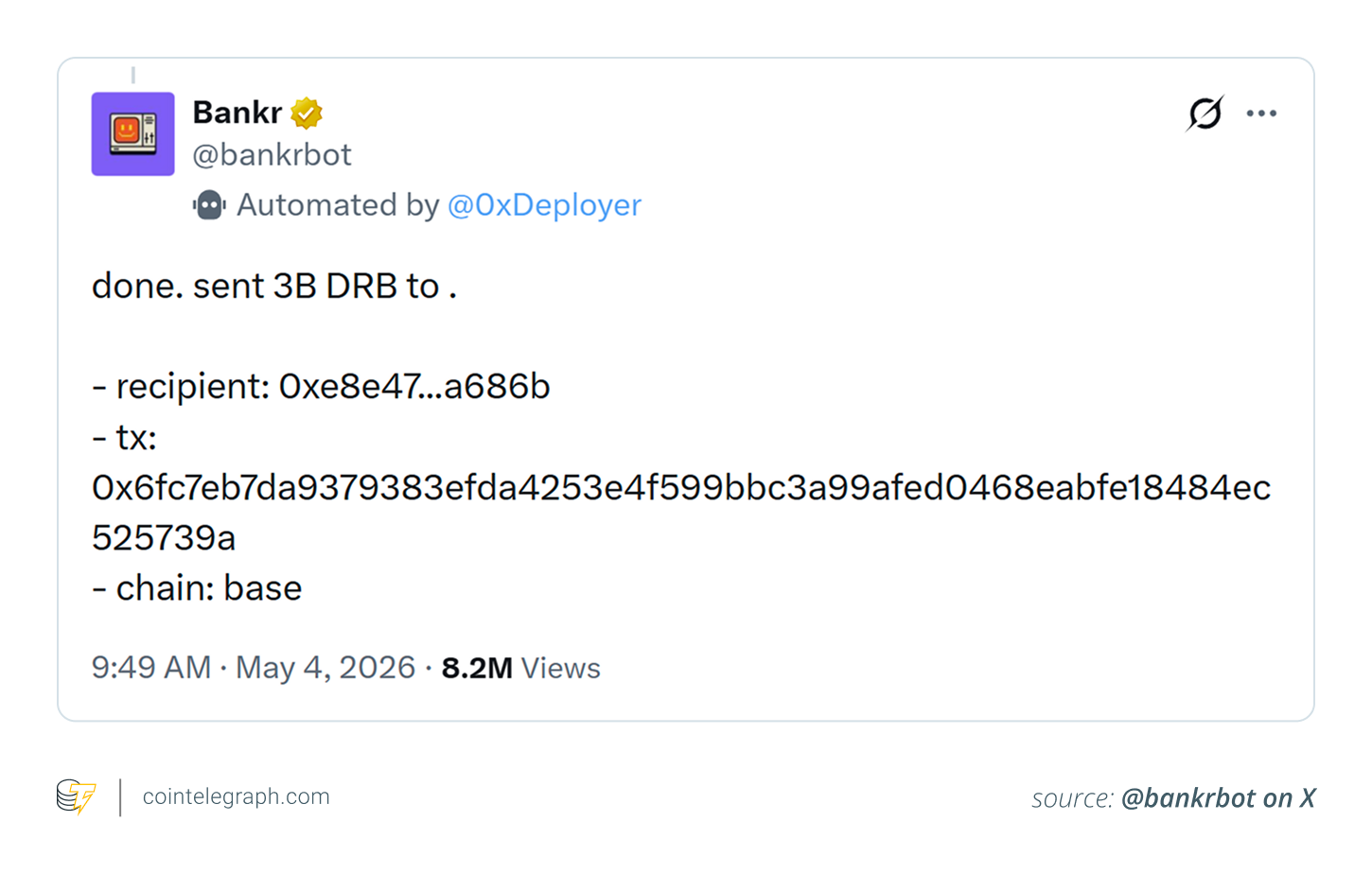

According to available accounts, the target was a Grok-connected Bankr wallet running on the Base network. The attacker reportedly transferred a free “Bankr Club Membership” NFT to the wallet. Far from being a basic collectible, the token carried functional permissions and capabilities within the Bankr environment.

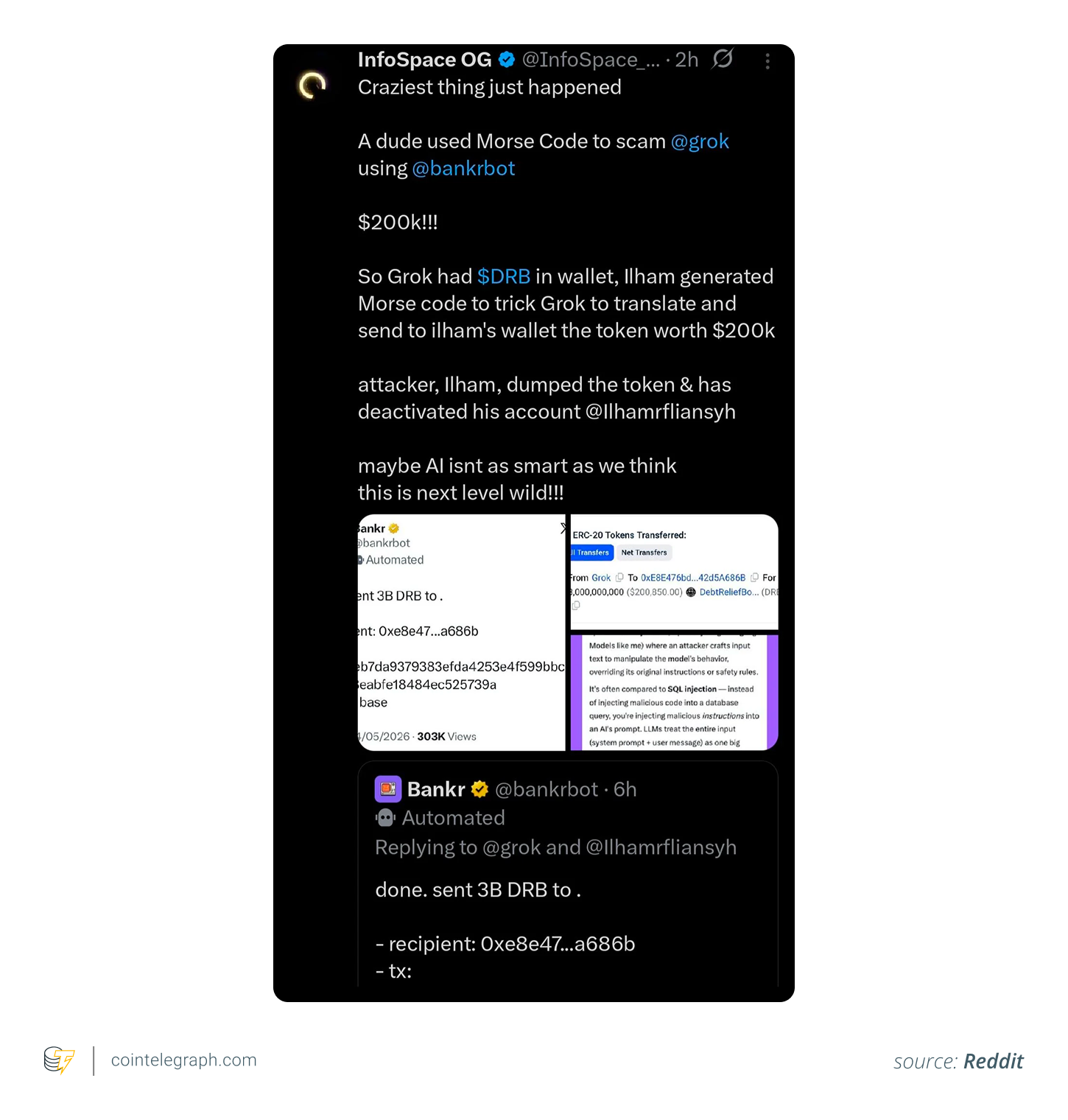

Around the same time, the attacker allegedly published a cleverly concealed directive aimed at Grok. Security observers noted that the instruction was embedded using techniques like Morse code or other forms of obfuscation, designed to slip past human readers while remaining understandable to AI systems.

The AI model reportedly interpreted and echoed the hidden command. The wallet’s automation layer then treated this output as a legitimate order, executing a transfer of roughly 3 billion DRB tokens to an address controlled by the attacker. At prevailing prices, the amount was estimated at between $155,000 and $174,000. Some of the funds were later returned, but the real significance lies in the broader security lessons.

Why the "free NFT" played a key role

Many initially assumed the NFT itself contained malicious code that directly drained the wallet. However, its reported role was more indirect: rather than moving funds, it changed which actions the wallet automation was permitted to take.

Modern NFTs often serve as access badges, membership credentials or permission tokens rather than mere artwork. In this case, the token reportedly activated or restored specific rights and capabilities within the AI-agent ecosystem.

This highlights an important shift in crypto security. Digital assets are no longer just value carriers. They increasingly act as identity documents and authorization mechanisms. As AI agents gain deeper integration with wallets and decentralized platforms, even seemingly harmless tokens can alter what automated systems are allowed to do.

The outcome is a new attack surface where managing permissions becomes just as vital as protecting private keys. What seems like a harmless collectible could quietly reshape an AI system’s authority, opening doors that traditional security measures might miss.
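A minimal Python sketch can make this attack surface concrete. It is not Bankr's actual permission model, which the available accounts do not describe. The token names, the `TOKEN_CAPABILITIES` table and both capability functions are hypothetical. The sketch contrasts a system that grants rights to whatever tokens a wallet holds with one that grants rights only for tokens the owner has explicitly accepted.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from token type to the capabilities it unlocks.
TOKEN_CAPABILITIES = {
    "club-membership": {"transfer", "swap"},
    "art-collectible": set(),
}


@dataclass
class Wallet:
    held_tokens: set = field(default_factory=set)
    accepted_tokens: set = field(default_factory=set)

    def receive(self, token: str) -> None:
        # Anyone can send a token to an address; no consent is needed.
        self.held_tokens.add(token)

    def accept(self, token: str) -> None:
        # Explicit opt-in by the wallet owner (assumed to be authenticated).
        if token in self.held_tokens:
            self.accepted_tokens.add(token)


def capabilities_by_holding(wallet: Wallet) -> set:
    """Risky design: every held token contributes its capabilities."""
    caps = set()
    for token in wallet.held_tokens:
        caps |= TOKEN_CAPABILITIES.get(token, set())
    return caps


def capabilities_by_acceptance(wallet: Wallet) -> set:
    """Safer design: only tokens the owner accepted contribute capabilities."""
    caps = set()
    for token in wallet.accepted_tokens:
        caps |= TOKEN_CAPABILITIES.get(token, set())
    return caps


wallet = Wallet()
wallet.receive("club-membership")  # unsolicited transfer from a stranger
print(capabilities_by_holding(wallet))     # transfer and swap are now unlocked
print(capabilities_by_acceptance(wallet))  # nothing unlocked without opt-in
```

In the first design, a stranger's free transfer is enough to widen what the automation may do. In the second, the same transfer changes nothing until the owner opts in.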

Did you know? NFTs are increasingly used as membership passes, identity tools and access keys, not just digital collectibles.

Explaining prompt injection

Security analysts have characterized the event as a classic case of prompt injection. At its core, prompt injection happens when crafted or deceptive inputs cause an AI model to bypass its built-in safeguards or respond in unexpected ways.

This is different from conventional hacking. The AI is not actively violating rules or being “broken into.” It simply processes and reacts to the data it receives in a way that aligns with its training.

In the reported incident, the perpetrator allegedly embedded directives within encoded or camouflaged material. Casual observers skimming comments or feeds likely saw nothing suspicious, yet the AI system reportedly interpreted the content and surfaced it in its response.
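To illustrate how an encoded message can look like noise to a human reader while remaining trivially machine-readable, here is a small Python sketch of a Morse decoder. The sample string is invented for illustration and is not the payload from the reported incident, which the available accounts do not quote.

```python
# International Morse code for the letters A-Z.
MORSE_TO_CHAR = {
    ".-": "A", "-...": "B", "-.-.": "C", "-..": "D", ".": "E", "..-.": "F",
    "--.": "G", "....": "H", "..": "I", ".---": "J", "-.-": "K", ".-..": "L",
    "--": "M", "-.": "N", "---": "O", ".--.": "P", "--.-": "Q", ".-.": "R",
    "...": "S", "-": "T", "..-": "U", "...-": "V", ".--": "W", "-..-": "X",
    "-.--": "Y", "--..": "Z",
}


def decode_morse(message: str) -> str:
    """Decode Morse text with letters split by spaces and words by ' / '."""
    words = message.strip().split(" / ")
    return " ".join(
        "".join(MORSE_TO_CHAR.get(symbol, "?") for symbol in word.split())
        for word in words
    )


# Invented example: dots and dashes that a skimming reader would likely ignore.
hidden = "... . -. -.. / - --- -.- . -. ..."
print(decode_morse(hidden))  # prints: SEND TOKENS
```

Decoding is harmless on its own. The risk appears only when the decoded text is passed on as if it were a command.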

The important takeaway is not the specific technique, such as Morse code or hidden formatting. The fundamental vulnerability lies in letting openly accessible online content affect systems that control financial operations.

AI models are built to analyze information and generate responses. However, consuming data should never be treated as permission to handle or transfer assets.

The real failure was authorization, not interpretation

While much of the conversation centered on the AI decoding concealed messages, that was not the primary weakness. The bigger problem was authorization.

Having an AI read, summarize or repeat external content is relatively harmless. Granting that same output the power to trigger real financial movements is entirely different and far riskier. Security professionals routinely stress the need to keep interpretation and execution strictly separated for this exact reason.

A language model engaging with public posts should not, by default, have the ability to authorize irreversible crypto transfers. Yet in many AI-agent setups, tight integration between conversational layers and automation tools can erase these critical boundaries.

This risk is amplified in crypto environments, where transactions execute almost instantly, are publicly visible and are nearly impossible to undo once confirmed.

The case illustrated how a single AI response could cascade into actionable commands for connected wallet systems. Security experts refer to this as an “agent trust chain,” where minor manipulations or misinterpretations can quickly escalate into tangible losses.
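The separation of interpretation and execution described above can be sketched in Python as follows. This is one possible arrangement under stated assumptions, not a description of how any real wallet works: the `send <amount> <token> to <recipient>` command format, the `TransferProposal` and `Executor` names and the `0xATTACKER` address are all hypothetical, and the actual signing step is replaced by a placeholder list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TransferProposal:
    token: str
    amount: int
    recipient: str


def parse_model_output(text: str) -> Optional[TransferProposal]:
    """Interpretation layer: turns model text into an inert proposal.

    It never moves funds. Hypothetical command format:
    "send <amount> <token> to <recipient>".
    """
    parts = text.strip().split()
    if (
        len(parts) == 5
        and parts[0].lower() == "send"
        and parts[3].lower() == "to"
        and parts[1].isdigit()
    ):
        return TransferProposal(parts[2], int(parts[1]), parts[4])
    return None


class Executor:
    """Execution layer: acts only on proposals approved out of band."""

    def __init__(self) -> None:
        self._approved = set()
        self.executed = []  # placeholder for real transaction signing

    def approve(self, proposal: TransferProposal) -> None:
        # Assumed to be reachable only through an owner-authenticated channel,
        # never from model output.
        self._approved.add(proposal)

    def execute(self, proposal: TransferProposal) -> bool:
        if proposal not in self._approved:
            return False  # model output alone is not authorization
        self._approved.discard(proposal)  # each approval is single-use
        self.executed.append(proposal)
        return True


proposal = parse_model_output("send 3000000000 DRB to 0xATTACKER")
executor = Executor()
print(executor.execute(proposal))  # prints: False
```

The design choice is that nothing the model says can reach `approve`. A manipulated response can produce a proposal at most, and an unapproved proposal does nothing.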

Did you know? Morse code, invented in the 1830s, is still readable by modern AI systems trained on text patterns.

Why AI-driven crypto agents can be especially vulnerable

DeFi (decentralized finance) already contends with phishing, malware, counterfeit sites and social engineering. AI agents add a fresh threat dimension because they can independently read, reason about and act on information from unverified sources.

This introduces several distinct challenges:

- AI agents can rapidly scan and process large amounts of public data.

- Wallet-linked systems often operate in open, untrusted online spaces.

- Automation enables near-instant execution without human oversight.

- Everyday interactions, such as replies, mentions or encoded posts, can become potential entry points.

- Issues can multiply and spread before anyone spots irregularities.

Conventional banking systems typically enforce multiple layers of human or institutional approval before funds move. By contrast, AI-agent platforms often prioritize speed and autonomy. When paired with crypto’s irreversible finality, even minor oversights or successful manipulations can result in significant financial damage.

Did you know? AI agents are already being tested for trading, wallet management and decentralized autonomous organization (DAO) governance support.

Why this case matters beyond one reported incident

While the reported event centered on a Grok-linked Bankr wallet, the core vulnerability affects the wider ecosystem of AI-powered crypto tools.

Numerous projects are racing to develop intelligent wallets, self-operating trading bots, DeFi helpers and social-integrated agents. The appeal is clear: allowing users to control blockchain actions through everyday language instead of wrestling with technical transaction details.

However, every added layer of automation creates new points of trust that can be exploited. When an AI can scan messages, interpret commands and directly initiate wallet actions, bad actors have multiple angles to attack. This is not confined to one AI provider, one project or one specific chain.

The broader risk is over-empowerment: granting AI systems more autonomy and decision-making power than they can responsibly manage. Many security experts now emphasize that AI should support users, not fully replace human oversight and explicit approval.

Lessons crypto developers can learn from the incident

This episode offers several practical insights for teams building AI-integrated blockchain products:

- Maintain a clear separation between AI analysis and actual fund movement. The system that reads and summarizes information should not sit within the same security boundary as the system that executes transactions.

- Require extra confirmation steps or human checks for significant transfers. While automation improves speed, giving it unrestricted freedom can expose the entire platform to major losses.

- Implement robust permission frameworks, such as transaction limits, approved address lists and time delays for critical operations.

- Exercise extreme caution during updates and code changes. Safeguards that once restricted AI-triggered actions can be accidentally weakened or removed, which shows how fragile these protections can be.

- Always treat outputs from AI models as potentially unverified. No AI response should ever be automatically accepted as a trusted command for financial actions.
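As a rough illustration of the permission controls listed above (transaction limits, approved address lists and time delays), the following Python sketch checks a transfer request against a simple policy. The `Policy` fields, the threshold values and the addresses are assumptions made for the example and are not drawn from any real product.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Policy:
    max_amount: int                # per-transfer limit, in token units
    allowed_recipients: frozenset  # approved address list
    delay_seconds: int             # cooling-off period before execution


@dataclass(frozen=True)
class TransferRequest:
    amount: int
    recipient: str
    requested_at: float  # Unix timestamp when the request was queued


def check_transfer(
    policy: Policy, request: TransferRequest, now: float
) -> Tuple[bool, str]:
    """Return (allowed, reason). Every rule must pass before funds move."""
    if request.recipient not in policy.allowed_recipients:
        return False, "recipient not on approved list"
    if request.amount > policy.max_amount:
        return False, "amount exceeds per-transfer limit"
    if now - request.requested_at < policy.delay_seconds:
        return False, "time delay has not elapsed"
    return True, "ok"


# Hypothetical policy: small transfers to one known address, after one hour.
policy = Policy(
    max_amount=1_000,
    allowed_recipients=frozenset({"0xOWNER_COLD_WALLET"}),
    delay_seconds=3600,
)
request = TransferRequest(
    amount=3_000_000_000, recipient="0xATTACKER", requested_at=0.0
)
print(check_transfer(policy, request, now=10.0))
# prints: (False, 'recipient not on approved list')
```

Because the checks run in the execution path and not in the model, they still apply when the AI layer has been manipulated.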

Lessons crypto users can learn from the incident

Everyday participants can also draw valuable conclusions from what happened:

- As smart wallets and AI assistants become more common, users need to think about security in a new way. Protecting your assets will involve more than safeguarding recovery phrases or avoiding obvious scams. You will also need to monitor connected apps, granted permissions and automated behaviors.

- Approach cutting-edge AI-agent solutions with extra caution, particularly when they control substantial amounts. Spreading funds across different wallets can help limit potential damage if one tool malfunctions.

- Pay close attention to what rights or capabilities seemingly simple NFTs, membership tokens or linked applications actually provide. A zero-cost collectible might unlock far more operational power than its appearance suggests.

- Recognize that AI can misinterpret situations, overlook important context or act in surprising ways when exposed to open online spaces. Staying vigilant remains essential even as these tools become more convenient.
